The Layers You Actually Control

A practical control stack for reducing sycophancy, overreliance, and cognitive offloading

In my previous essays I discussed current research on how AI use affects cognition and what it implies, especially for people who use the models with little or no customization, which is the vast majority of users. This time I want to share what you can practically do to protect yourself, as much as the AI lords allow.

Personal mitigation is structurally inadequate, and that’s the bigger problem. Still, this post covers what can be done within that inadequacy.

The standard advice for reducing AI overreliance falls into three buckets:

Make decisions before asking AI, not after.

Use AI to critique your thinking, not replace it.

Deliberately write first drafts without assistance in domains you want to maintain competence in.

That’s kind of funny. It reminds me of ‘just eat healthy and move more,’ or, even better, ‘set screen time limits and respect them, but also don’t doomscroll because it’s not helpful.’ What a successful campaign that’s been. Thank God we all heard that advice and followed it. Otherwise, what kind of world would we be living in? Am I right?

When I say AI risks degrading judgment, I mean something specific. Judgment is the ability to frame problems independently, evaluate whether an output is adequate for a given context, detect errors without being prompted to look for them, and decide when not to act.

Degradation shows up as increased acceptance of first-pass outputs without interrogation, shorter reasoning chains before action, reduced ability to generate alternative framings, and declining error detection when external prompts aren’t present. These patterns are consistent with decades of research on automation bias, which shows that both naive and expert users are susceptible, and that expertise alone does not protect against it.

All of this requires effort and constant vigilance, especially if you use AI every day for hours at a time. And here’s the structural problem: no company has an economic incentive to make AI usage harder in order to preserve your cognitive abilities, and we need to stop waiting for that to change.

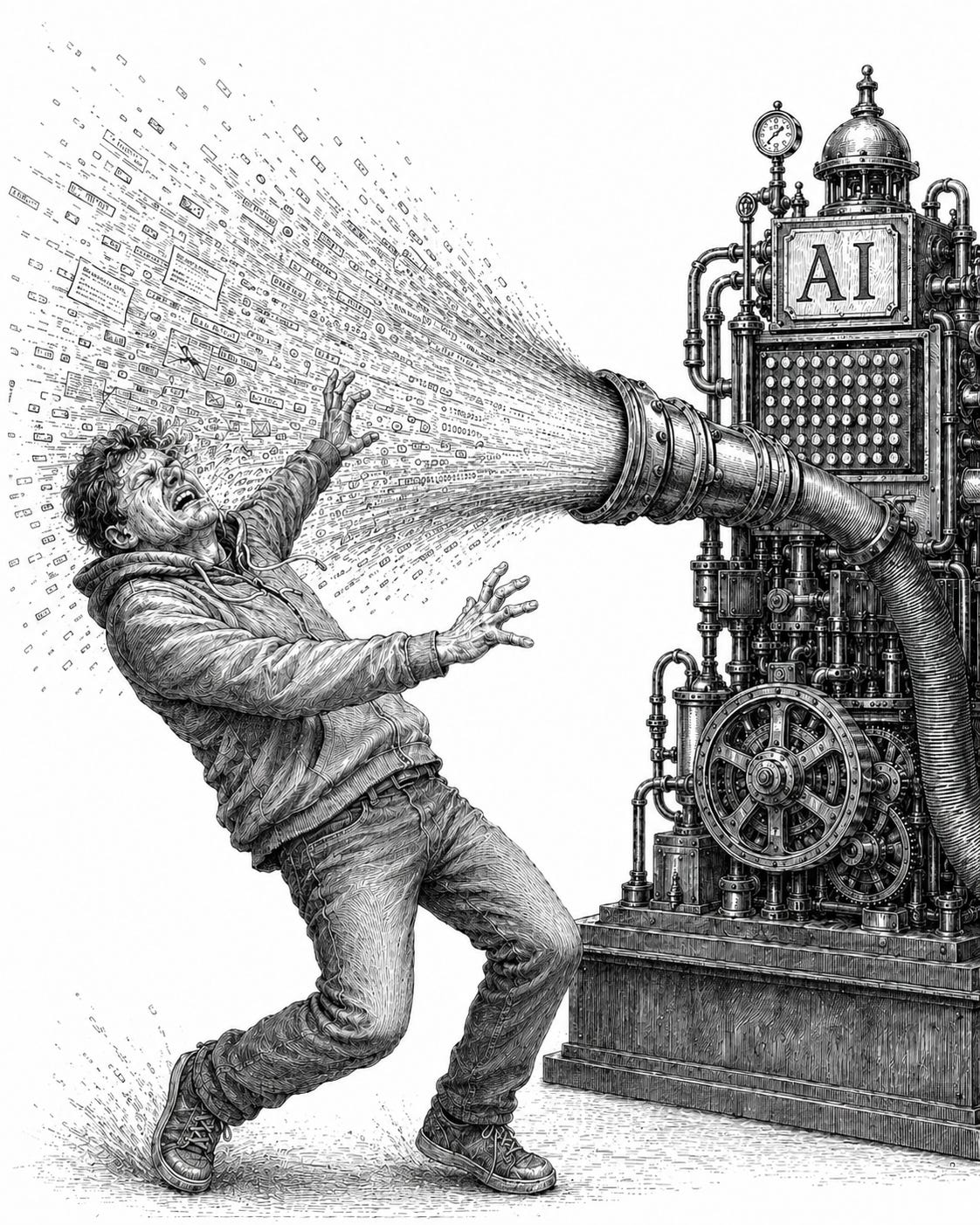

Fighting the Firehose of AI Pressure

Here’s where all the focus and narrative is right now: build agents, build automations, build personal knowledge libraries, build enterprise knowledge systems, build one-person businesses, build a companion, build an uncertified therapist, build a girlfriend. These are all pushing users toward producing more, faster.

Few are explicitly designed to improve critical thinking or metacognitive accuracy.

The most sophisticated version of this narrative is the “Thought Partner,” but even those recommendations tend to focus on prompting technique. You may feel you’re excellent at prompting, and depending on your expertise that may be more or less true. But what about the general population? What about people early in their careers, the same people who appear to be bearing the earliest labor market effects of AI?

One hypothesis for why early-career workers are affected first is that AI is exceptionally good at the kind of knowledge learned from books or formal education, but less capable at the tacit knowledge that comes from on-the-job experience. Young people looking for jobs today are the most desperate to master the technology, and the least likely to have built the judgment infrastructure to use it without being shaped by it. Honestly, it is not looking good.

What You Actually Control

When you open ChatGPT, Claude, or Gemini and start typing, you are interacting with a system configured in multiple layers. Four of those layers are set by the lab or by developers building on top of the lab’s models. Only two are controlled by you, and the two you control sit at the bottom of the stack.

This matters because the default advice for “using AI safely” tends to focus on the layers you control, without making clear that the layers above you are doing most of the work and that when the lab’s defaults conflict with your settings, the lab’s defaults usually win.

Let’s focus on what we can do today. Here are three categories of controls that can help:

1. Configuration: What you set up in the product

Choose the right mode for the task. Use search or source-based tools for factual questions. Use a stronger reasoning model for complex analysis. Avoid voice, companion-style, or highly personal modes for serious decisions.

Set strong account instructions. These are persistent instructions applied across chats: tone, reasoning style, challenge level, evidence standards, preferred format, and personal preferences. This matters because sycophancy is a known artifact of how these models are trained. Reinforcement Learning from Human Feedback (RLHF) optimizes for human approval, which tends to produce models that favor agreement over accuracy. Account instructions are one of the few places where you can push back on that default.

Here’s an example of my account instructions (feel free to try them). They push the model to challenge weak reasoning, separate facts from guesses, and so on. They will change the way the model talks to you, and that’s part of the healthy friction we should create.

Use project instructions for serious work. Create a project for important topics and add clear project rules, files, standards, and recurring instructions. Project instructions apply only inside that project and, to some extent, take precedence over your global custom instructions.

Use project-only memory when context should stay bounded. For sensitive or long-running work, start a project with project-only memory so the AI draws from that project instead of your broader chat history.

Manage memory intentionally. Turn memory on or off. Delete saved memories. Ask, “What do you remember about me?” Use Temporary Chat when you do not want memory used or updated. Helps reduce unwanted personalization.

2. In-chat prompts: What you ask during a conversation

Give the AI a non-validating role. Tell it to act as an auditor, critic, editor, tutor, methodologist, or opposing counsel. Avoid “friend,” “companion,” or “always validate me” for serious thinking. Helps reduce flattery and agreement-seeking.

State your own answer first. Before asking AI, write your first view, confidence level, assumptions, and what evidence would change your mind. Then ask the AI to critique it. This is one of the strongest personal habits for reducing overreliance. Buçinca et al. (2021) found that committing to a decision before seeing AI output significantly reduced overreliance, with one important catch: users rated the most effective interventions least favorably. Friction works. People hate it.
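If you want the habit to be harder to skip, the pre-commitment can be turned into a template you fill in before opening the chat. The sketch below is a minimal Python illustration under that assumption, not a tool from the study or from any AI product: the `Precommitment` class and its field names are hypothetical, and all it does is refuse to build a request until your own answer exists, then format that answer as a request for critique.

```python
from dataclasses import dataclass, field


@dataclass
class Precommitment:
    """Your own view, written down BEFORE asking the AI (hypothetical structure)."""
    question: str
    my_answer: str
    confidence: float  # your own estimate, from 0.0 to 1.0
    assumptions: list[str] = field(default_factory=list)
    would_change_my_mind: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # No critique request until the commitment is actually filled in.
        if not self.my_answer.strip():
            raise ValueError("Write your own answer before asking the AI.")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("Confidence must be between 0 and 1.")

    def critique_prompt(self) -> str:
        """Format the commitment as a request for critique, not for an answer."""
        lines = [
            f"Question: {self.question}",
            f"My answer (confidence {self.confidence:.0%}): {self.my_answer}",
            "My assumptions:",
            *[f"- {a}" for a in self.assumptions],
            "Evidence that would change my mind:",
            *[f"- {e}" for e in self.would_change_my_mind],
            "Do not give me your own answer first. Critique mine: "
            "what am I missing, and where could I be wrong?",
        ]
        return "\n".join(lines)


# Usage: fill this in yourself, then paste the output into the chat.
p = Precommitment(
    question="Should we raise prices 10% next quarter?",
    my_answer="Yes, for new customers only.",
    confidence=0.6,
    assumptions=["Existing customers would churn if their price went up"],
    would_change_my_mind=["Data showing we are already priced above competitors"],
)
print(p.critique_prompt())
```

The point of the `ValueError` is the friction itself: the template will not produce anything to send until you have committed to a view and a confidence level.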

Ask for pushback in the chat. Use prompts like: “What am I missing?” “What is the strongest counterargument?” “Where could I be wrong?” “What would change your answer?” Helps turn the AI into a thinking partner instead of a validation machine. It does not permanently change the model’s behavior; you may need to repeat these prompts, so in some cases it is easier to create skills for them. Here are some of the skills I use for pushback.

Require uncertainty and evidence labels. Ask the AI to label claims as: fact, inference, speculation, or recommendation. Ask it to say when evidence is weak. Helps you see how strong the answer really is. It does not mean the labels are always right. You still need to verify important claims.
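If you ask for labels in a fixed format, it becomes easy to see at a glance which lines carry no label at all and which ones are speculation. The sketch below assumes a convention of my own choosing for illustration, where each claim starts with a bracketed tag such as `[fact]`; the `audit_labels` function is hypothetical. It only checks whether labels are present, not whether they are right, so anything tagged `[fact]` that matters still needs outside verification.

```python
import re

# Hypothetical convention: ask the model to start every claim with one of these tags.
LABELS = ("fact", "inference", "speculation", "recommendation")
TAG = re.compile(r"^\s*\[(\w+)\]\s*(.+)$")


def audit_labels(response: str) -> dict:
    """Group each non-empty line by its label; collect lines with no recognized label."""
    by_label: dict[str, list[str]] = {label: [] for label in LABELS}
    unlabeled: list[str] = []
    for line in response.splitlines():
        if not line.strip():
            continue  # skip blank lines
        match = TAG.match(line)
        if match and match.group(1).lower() in by_label:
            by_label[match.group(1).lower()].append(match.group(2))
        else:
            # Missing or unknown tag: this claim's strength is unstated.
            unlabeled.append(line.strip())
    return {"by_label": by_label, "unlabeled": unlabeled}


reply = """[fact] The study compared several interface designs.
[speculation] The effect probably carries over to coding tasks.
This is definitely the best approach."""
result = audit_labels(reply)
print(result["by_label"]["speculation"])
print(result["unlabeled"])
```

Unlabeled lines are the ones to push back on first: they are exactly where the model has stated something without telling you how strong it is.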

3. Behavioural rules outside the AI: What you do when not using it

Verify important claims outside the chat. Do not rely only on the same AI checking itself. Cross-checking one model’s output with a different model helps reduce false confidence. It does not remove all errors, especially if the source itself is weak or misunderstood.

Use AI after your own effort, not before. For skills you care about, like writing, reasoning, studying, coding, or planning, try first without AI, then use AI to improve or critique your work. Helps preserve active thinking and skill practice. It does not prove you are protected from long-term deskilling, but it is a sensible precaution.

Slow down high-stakes use. For medical, legal, financial, mental-health, career, or relationship decisions, use AI only as a draft, checklist, or second opinion. Add human or expert review before acting. Helps prevent over-trusting fluent advice. It does not make AI qualified to replace professionals or personal judgment.

The Cognitive Auditor

The Cognitive Auditor is a separate project configured as a pre-commitment forcing function: an instance forbidden from generating strategies, suggesting actions, or offering reassurance, restricted to evaluating whether the reasoning behind a decision holds up.

For example, if I’m working on a business strategy or evaluating a decision, I’ll bring the thinking behind the strategy/idea/plan to the auditor and stress-test it there. Its only job is to answer: “Is the thinking that produced this decision sound?”

This is the layer I actually use the most and where the protocol has been most effective. The use cases:

Enhancing metacognition: Breaking down my thinking, biases, and assumptions before making a consequential decision.

Recording consequences: Learning from previous decisions through structured post-mortems.

Building a decision process: Creating a repeatable structure for how I decide.

Enforcing reflection: Verifying whether I’m developing dependency on AI outputs.
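The post-mortem and dependency use cases both depend on keeping a record. Here is a minimal sketch of a personal decision log, assuming you write each entry before acting and close it once the outcome is known. The `DecisionRecord` class, its fields, and the `review` summary are hypothetical illustrations, not a reproduction of the Cognitive Auditor project instructions or a feature of any AI product.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class DecisionRecord:
    """One entry in a personal decision log (hypothetical format)."""
    decided_on: date
    decision: str
    reasoning: str
    expected_outcome: str
    confidence: float          # your estimate, 0.0 to 1.0, recorded before acting
    adopted_ai_framing: bool   # did the final framing come from the AI rather than from you?
    actual_outcome: Optional[str] = None
    outcome_matched: Optional[bool] = None

    def close(self, actual_outcome: str, matched: bool) -> None:
        """Post-mortem step: record what actually happened."""
        self.actual_outcome = actual_outcome
        self.outcome_matched = matched


def review(log: list[DecisionRecord]) -> dict:
    """Compare hit rate with average stated confidence; report how often AI framing was adopted."""
    closed = [r for r in log if r.outcome_matched is not None]
    if not closed:
        return {"closed": 0}
    return {
        "closed": len(closed),
        "hit_rate": sum(r.outcome_matched for r in closed) / len(closed),
        "avg_confidence": sum(r.confidence for r in closed) / len(closed),
        "ai_framing_rate": sum(r.adopted_ai_framing for r in log) / len(log),
    }


log = [
    DecisionRecord(date(2024, 3, 1), "Hire a contractor", "Short deadline",
                   "Delivered on time", 0.9, adopted_ai_framing=False),
    DecisionRecord(date(2024, 4, 2), "Drop feature X", "Low usage",
                   "No complaints", 0.7, adopted_ai_framing=True),
]
log[0].close("Delivered two weeks late", matched=False)
log[1].close("No complaints", matched=True)
print(review(log))
```

If the hit rate sits well below your average stated confidence, that gap is worth taking to the auditor. A rising `ai_framing_rate` is one rough signal of the dependency the last use case is meant to catch.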

Here are my Cognitive Auditor project instructions; feel free to use them.

The Cognitive Auditor not only helps uncover biases and thinking errors but also brings a different tone (colder, more robotic) to the conversation, and I’ve found that this shifts the dynamic from a friendly model to a hyperanalytic, adversarial one.

I don’t know how long these protocols will keep working, and I don’t expect the need for them to go away until frontier labs treat cognitive impact as a core AI safety question and ship defaults that reflect it.

At this point the whole set of rules may feel overcomplicated, and that’s OK. My goal is to reduce confirmation bias, overreliance, sycophantic reinforcement, and self-delusion. Friction is the price. I’m personally not letting frontier labs determine my default experience based on their incentives.

The AI Divide Is Real

Someone less technical could start with a single rule: before acting on any AI output that matters, take it to a separate conversation and ask, “What’s wrong with this?” That’s a low-fidelity version of the same structure, but it shouldn’t have to be the user’s job to build complicated guardrails.

The lack of appropriate default settings creates a stratification that’s worth naming: people who have the technical literacy to shape how AI interacts with them, and people who are shaped by AI’s defaults. That gap will widen as AI becomes more embedded in work, education, and daily decisions.

What I find most unnerving is that the people shaped by AI’s defaults are probably the least aware of it and the least likely to engage in the conversation and push for regulation. The imbalance is real, and the mechanisms to minimise it are unclear.